Observability & Reliability in Legacy Modernization

Seeing and Trusting Modern Systems

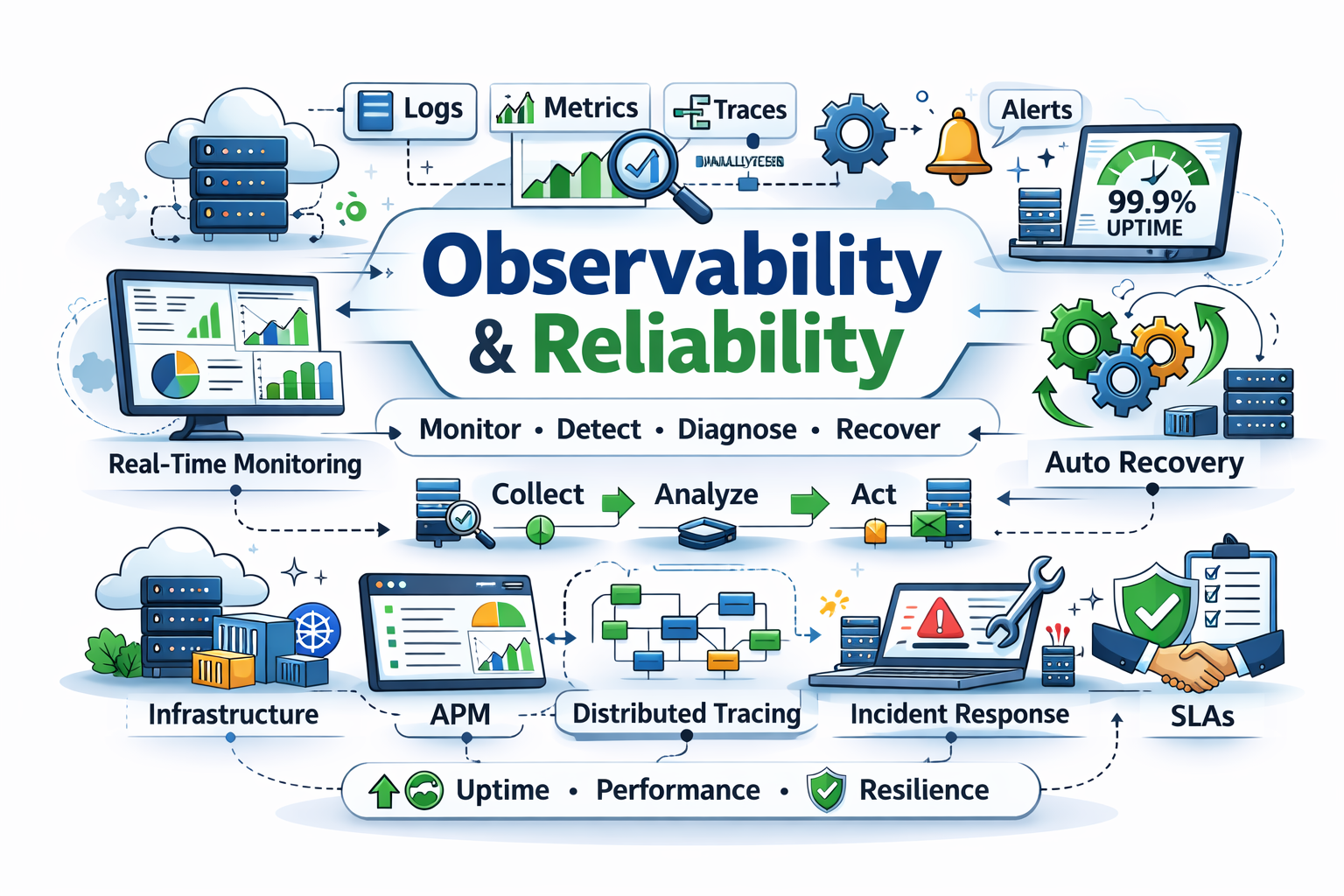

Modernization unlocks agility only if teams can see what’s happening in production and react before customers notice issues. Observability is the nervous system of your modernization program, and reliability practices make sure that nervous system triggers the right muscles in time. This article covers logging, metrics, traces, health checks, SLOs and SLAs, error budgets, incident management, and chaos engineering, each adapted to the regulatory constraints of banking, financial services, and insurance (BFSI).

Observability Three Ways: Logs, Metrics, Traces

Logging Strategy

- Structured logging: JSON with correlation IDs, tenant IDs, and risk classification tags (PCI, PII) for redaction.

- Centralized ingestion: Kafka or managed log pipelines feeding Splunk, ELK, or Datadog.

- Retention tiers: hot (7-14 days) for investigations, cold (90+ days) for compliance audits.

- Privacy controls: field-level masking, tokenization, and DLP scans.

- AI-powered log summarization: cluster repetitive messages into templates, then use LLMs to summarize the clusters and highlight anomalies.
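A minimal sketch of the structured-logging and privacy bullets above, using only Python's standard `logging` module. Each record becomes one JSON object carrying a correlation ID, tenant ID, and data-classification tag, and any field on a sensitive list is masked before the line leaves the process. The field names (`correlation_id`, `tenant_id`, `data_class`, `fields`) and the `SENSITIVE_FIELDS` set are hypothetical; a real pipeline would still apply tokenization and DLP scanning downstream.

```python
import json
import logging

# Hypothetical set of field names classified as sensitive (PCI/PII).
SENSITIVE_FIELDS = {"card_number", "account_number", "national_id"}


def mask(value, visible: int = 4) -> str:
    """Replace all but the last `visible` characters with '*'."""
    text = str(value)
    if len(text) <= visible:
        return "*" * len(text)
    return "*" * (len(text) - visible) + text[-visible:]


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        fields = getattr(record, "fields", None) or {}
        payload = {
            "ts": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            # Context supplied through `extra=`; absent keys serialize as null.
            "correlation_id": getattr(record, "correlation_id", None),
            "tenant_id": getattr(record, "tenant_id", None),
            "data_class": getattr(record, "data_class", None),
            # Mask sensitive values before the line leaves the process.
            "fields": {k: mask(v) if k in SENSITIVE_FIELDS else v
                       for k, v in fields.items()},
        }
        return json.dumps(payload)
```

Attach it with `handler.setFormatter(JsonFormatter())` and pass context per call through `extra=`, for example `log.info("transfer submitted", extra={"correlation_id": cid, "fields": {"card_number": pan}})`.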

Metrics Strategy

- Golden signals: latency, traffic, errors, saturation per service.

- Business metrics: approvals per minute, failed transfers, fraud alerts.

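- High-cardinality handling: label hygiene (no customer, account, or transaction IDs as metric labels), exemplars that link metric samples to individual traces instead, and scalable metrics pipelines (Prometheus + Cortex/Thanos).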

- Cost telemetry: track per-service cloud spend to correlate reliability with economics.

Distributed Tracing

- Trace propagation: use W3C Trace Context across services.

- Sampling: tail-based sampling decides after a trace completes, so traces with errors or unusual latency can be kept while routine traffic is sampled down.

- Service maps: visualize dependencies to inform modernization sequencing.

- AI trace clustering: models highlight common latency contributors.
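The W3C Trace Context `traceparent` header has the form `version-traceid-parentid-flags`. The sketch below, an illustration rather than a replacement for an OpenTelemetry propagator, parses an incoming header and builds the one to forward downstream: the trace ID is preserved, a fresh span ID is minted, and the sampled flag is carried through. It handles only version `00` headers and ignores `tracestate`; the helper names are hypothetical.

```python
import re
import secrets
from dataclasses import dataclass
from typing import Optional

# version "-" trace-id "-" parent-id "-" trace-flags, all lowercase hex
_TRACEPARENT = re.compile(r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


@dataclass(frozen=True)
class SpanContext:
    trace_id: str
    span_id: str
    sampled: bool


def parse_traceparent(header: str) -> Optional[SpanContext]:
    """Return the caller's span context, or None if the header is invalid."""
    match = _TRACEPARENT.match(header.strip())
    if not match:
        return None
    version, trace_id, parent_id, flags = match.groups()
    # The spec forbids version ff and all-zero trace or parent IDs.
    if version == "ff" or trace_id == "0" * 32 or parent_id == "0" * 16:
        return None
    return SpanContext(trace_id, parent_id, bool(int(flags, 16) & 0x01))


def child_traceparent(incoming: Optional[str]) -> str:
    """Header to send downstream: same trace ID, new span ID, same sampled flag."""
    parent = parse_traceparent(incoming) if incoming else None
    trace_id = parent.trace_id if parent else secrets.token_hex(16)  # start a new trace
    sampled = parent.sampled if parent else True
    span_id = secrets.token_hex(8)
    return f"00-{trace_id}-{span_id}-{'01' if sampled else '00'}"
```

Adapters in front of legacy systems are a natural place for this logic, since they are often where the trace would otherwise break.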

Health Checks & Readiness Probes

- Liveness probes detect stuck threads or deadlocks.

- Readiness probes ensure services only receive traffic after warming caches or syncing with legacy systems.

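- Dependency health: readiness probes validate downstream dependencies (DB, queues, third-party APIs). Keep these checks out of liveness probes, so a dependency outage takes instances out of rotation instead of triggering restart loops.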

- Regulator transparency: log probe results for resiliency reporting.
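The split between the two probe types can be sketched as plain functions that an HTTP handler would expose (for example on hypothetical `/livez` and `/readyz` routes). Liveness fails only when the worker's heartbeat stalls; readiness returns 503 until caches are warm and every registered dependency check passes. Keeping dependency checks out of liveness is a common design choice, since restarting a healthy instance does not fix a down database. `ProbeState` and the dependency callables are assumptions.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple


@dataclass
class ProbeState:
    heartbeat_at: float = field(default_factory=time.monotonic)
    cache_warm: bool = False
    # Hypothetical dependency checks: name -> callable returning True when healthy.
    dependencies: Dict[str, Callable[[], bool]] = field(default_factory=dict)

    def beat(self) -> None:
        """Called by the worker loop on every iteration."""
        self.heartbeat_at = time.monotonic()


def liveness(state: ProbeState, max_stall_s: float = 30.0,
             now: Optional[float] = None) -> Tuple[int, dict]:
    """Fail only when the worker loop has stalled; no dependency checks here."""
    now = time.monotonic() if now is None else now
    stalled = now - state.heartbeat_at > max_stall_s
    return (500 if stalled else 200), {"stalled": stalled}


def readiness(state: ProbeState) -> Tuple[int, dict]:
    """Stay out of rotation until caches are warm and all dependencies respond."""
    results = {}
    for name, check in state.dependencies.items():
        try:
            results[name] = bool(check())
        except Exception:  # a probe must never crash; treat errors as unhealthy
            results[name] = False
    ready = state.cache_warm and all(results.values())
    return (200 if ready else 503), {"cache_warm": state.cache_warm, "dependencies": results}
```

The response bodies double as the probe results you can log for resiliency reporting.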

SLOs, SLIs, and SLAs

- SLO (Service Level Objective): target reliability (e.g., 99.9% successful transfers).

- SLI (Service Level Indicator): metric measuring outcome (e.g., ratio of successful / total transfers).

- SLA (Service Level Agreement): contractual commitment with penalties.

Building SLOs

- Segment services by tier (core banking vs marketing microsite).

- Define customer journeys: payment submission, loan approval.

- Establish SLIs: request latency, error rate, settlement success.

- Set objectives aligned to business risk.

- Monitor with burn-rate alerts.
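Burn rate is the observed error ratio divided by the error ratio the SLO allows, (1 - SLO); a burn rate of 1 spends exactly the whole budget over the SLO period. The sketch below implements a multiwindow burn-rate check that pages only when both a long and a short window exceed the threshold, which filters out brief blips and stops paging soon after recovery. The window pairs and thresholds (14.4 over 1h/5m, 6 over 6h/30m) follow the example in Google's SRE Workbook for a 30-day SLO period; treat them as assumptions to tune.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Window:
    """Good and total event counts observed over one lookback window."""
    good: int
    total: int

    def error_ratio(self) -> float:
        return 0.0 if self.total == 0 else 1 - self.good / self.total


def burn_rate(window: Window, slo: float) -> float:
    """Observed error ratio divided by the error ratio the SLO allows."""
    return window.error_ratio() / (1 - slo)


# (long window, short window, threshold); assumes a 30-day SLO period,
# values taken from the multiwindow example in Google's SRE Workbook.
PAGE_RULES = [("1h", "5m", 14.4), ("6h", "30m", 6.0)]


def should_page(windows: Dict[str, Window], slo: float) -> bool:
    """Page only when BOTH windows of some rule burn at or above its threshold."""
    for long_w, short_w, threshold in PAGE_RULES:
        if (burn_rate(windows[long_w], slo) >= threshold
                and burn_rate(windows[short_w], slo) >= threshold):
            return True
    return False
```

Slower-burning rules (for example over several days) typically open a ticket rather than page.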

Error Budgets & Governance

Error budgets quantify how much unreliability you can afford before shipping slows.

- Budget policy: if the burn rate stays above 2x (budget being spent twice as fast as the SLO window allows), freeze feature releases until the service stabilizes.

- Visualization: dashboards showing consumption vs target, annotated with releases.

- AI forecasting: models predict future burn based on current incidents + planned changes.

- CAB (change advisory board) integration: change approvals require the current error budget status.
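Error budget arithmetic is simple enough to automate. A 99.9% SLO over 30 days tolerates (1 - 0.999) × 43,200 minutes = 43.2 minutes of full downtime; for request-based SLOs the budget is a count of failed requests. The sketch below computes budget consumption part-way through the period, the burn rate relative to elapsed time, a naive linear projection of when the budget runs out (a plain stand-in for the AI forecasting mentioned above), and applies the 2x freeze policy. The forecast request volume and all names are assumptions.

```python
from dataclasses import dataclass


def downtime_budget_minutes(slo: float, period_days: int = 30) -> float:
    """Minutes of total unavailability a time-based SLO tolerates per period."""
    return (1 - slo) * period_days * 24 * 60


@dataclass(frozen=True)
class BudgetStatus:
    allowed_failures: float  # failed requests the SLO tolerates over the full period
    consumed: float          # fraction of that allowance already spent (1.0 = exhausted)
    burn_rate: float         # 1.0 = on pace to spend exactly the budget by period end
    exhausts_at: float       # projected elapsed fraction at which the budget runs out
    freeze_releases: bool


def budget_status(slo: float, expected_requests: int, failed: int,
                  elapsed_fraction: float, freeze_above: float = 2.0) -> BudgetStatus:
    """Request-based error budget part-way through an SLO period.

    expected_requests is a forecast for the whole period (an assumption);
    elapsed_fraction is the share of the period already elapsed, in (0, 1].
    """
    allowed = (1 - slo) * expected_requests
    if allowed <= 0:
        raise ValueError("SLO must be below 100% and traffic must be positive")
    consumed = failed / allowed
    burn = consumed / elapsed_fraction
    # Naive linear projection: assume the current pace continues.
    exhausts_at = elapsed_fraction / consumed if consumed > 0 else float("inf")
    return BudgetStatus(allowed, consumed, burn, exhausts_at, burn > freeze_above)
```

An `exhausts_at` below 1.0 means the budget will run out before the period ends if nothing changes, which is the signal a CAB review or release pipeline can act on.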

Incident Management

Detection

- Alert taxonomy: critical (Tier 0), high, medium, informational.

- Multi-signal detection: combine metrics, logs, traces, synthetic monitors.

- AI noise reduction: event correlation reduces duplicate alerts by clustering similar symptoms.
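Before reaching for ML-based event correlation, many teams start with deterministic grouping. This sketch folds alerts that share a service and symptom into one incident as long as they keep arriving within a five-minute gap, and reports the incident at the highest severity seen. It is a simple key-based stand-in for the clustering described above; the `Alert` fields are assumptions, and the severity names follow the taxonomy in the list.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

SEVERITY_RANK = {"informational": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class Alert:
    ts: float      # epoch seconds
    service: str
    symptom: str   # e.g. "error_rate", "latency_p99"
    severity: str  # one of SEVERITY_RANK


@dataclass
class Incident:
    service: str
    symptom: str
    last_ts: float
    alerts: List[Alert]

    @property
    def severity(self) -> str:
        """Highest severity among the folded alerts."""
        return max((a.severity for a in self.alerts), key=SEVERITY_RANK.__getitem__)


def correlate(alerts: List[Alert], window_s: float = 300.0) -> List[Incident]:
    """Fold alerts with the same (service, symptom) into one incident while
    consecutive alerts arrive no more than window_s seconds apart."""
    open_incidents: Dict[Tuple[str, str], Incident] = {}
    incidents: List[Incident] = []
    for alert in sorted(alerts, key=lambda a: a.ts):
        key = (alert.service, alert.symptom)
        current = open_incidents.get(key)
        if current is not None and alert.ts - current.last_ts <= window_s:
            current.alerts.append(alert)
            current.last_ts = alert.ts
        else:
            current = Incident(alert.service, alert.symptom, alert.ts, [alert])
            open_incidents[key] = current
            incidents.append(current)
    return incidents
```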

Response

- Runbooks: markdown + automation scripts triggered via ChatOps.

- Incident command system: roles (commander, comms, scribe) with training.

- War rooms: Slack/Teams channels templated with checklists.

- AI copilots: summarize timeline, propose hypotheses, generate status updates.

Post-Incident Reviews

- Blameless culture: focus on systemic fixes.

- Structured templates: timeline, impact, contributing factors, remediation.

- Action tracking: Jira or Linear tasks linked to SLO dashboards.

- AI assistance: generate drafts from incident channel transcripts.

Chaos Engineering

- Game days: simulate region loss, dependency failure, or mainframe outage.

- Guardrails: start in staging, graduate to production with limits.

- Financial data protection: mask PII in chaos experiments.

- Observability validation: ensure experiments trigger expected alerts.

- Regulator briefing: share chaos results proving resilience.
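Guardrails are easiest to enforce when they live in the fault injector itself. The hypothetical `ChaosExperiment` class below wraps a dependency call and raises simulated connection failures, but only in allow-listed environments, only up to a blast-radius cap, and never after an abort check (for example, an SLO breach detector) fires. It illustrates the guardrail logic only; dedicated chaos engineering platforms add scheduling, targeting, and automated rollback.

```python
import functools
import random
from typing import Callable, Optional, Sequence


class ChaosExperiment:
    def __init__(self, name: str, failure_rate: float, env: str,
                 allowed_envs: Sequence[str] = ("staging",),
                 max_injections: int = 50,
                 abort_check: Optional[Callable[[], bool]] = None,
                 rng: Optional[random.Random] = None):
        self.name = name
        self.failure_rate = failure_rate
        self.enabled = env in allowed_envs             # guardrail 1: environment allow-list
        self.max_injections = max_injections           # guardrail 2: blast-radius cap
        self.abort_check = abort_check or (lambda: False)  # guardrail 3: abort signal
        self.rng = rng or random.Random()
        self.injected = 0

    def _should_inject(self) -> bool:
        if not self.enabled or self.injected >= self.max_injections:
            return False
        if self.abort_check():
            self.enabled = False  # halt injections for the rest of the run
            return False
        return self.rng.random() < self.failure_rate

    def inject(self, func):
        """Decorator: fail a fraction of calls to `func` while guardrails allow it."""
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            if self._should_inject():
                self.injected += 1
                raise ConnectionError(f"chaos[{self.name}]: simulated dependency failure")
            return func(*args, **kwargs)
        return wrapper
```

For a game day simulating a mainframe outage, you might wrap the adapter that calls the mainframe, start in staging, and point `abort_check` at your burn-rate alert before allowing `"production"`.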

AI-Powered Observability

💡 AI Assist Pattern

Use an AI-assisted analyzer (LLM + vector context from repos, tickets, and runtime traces) to surface modernization candidates automatically. Feed architecture rules, past incidents, cost telemetry, and code smells into the prompt so the model proposes risk-ranked remediation steps instead of generic advice.

Extend this to observability:

- Anomaly detection: unsupervised models on metrics to catch deviations.

- Root-cause analysis: LLMs correlate log clusters with deployment diffs.

- Capacity forecasting: predictions on queue depth, CPU, and storage growth.

- Narratives for regulators: AI drafts reliability reports referencing SLO data.
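As a baseline for metric anomaly detection, a rolling z-score flags points that sit far outside a trailing window. This is a simple statistical detector, not the unsupervised ML models described above, but it is a useful benchmark for them and catches abrupt shifts such as a sudden drop in settlement rate. Window size, threshold, and warm-up length are assumptions to tune per signal.

```python
import math
from collections import deque


class RollingZScore:
    """Flag values more than `threshold` standard deviations from the trailing mean."""

    def __init__(self, window: int = 60, threshold: float = 3.0, min_points: int = 10):
        self.history = deque(maxlen=window)  # trailing window of recent values
        self.threshold = threshold
        self.min_points = min_points  # warm-up before any point can be flagged

    def update(self, value: float) -> bool:
        anomalous = False
        if len(self.history) >= self.min_points:
            mean = sum(self.history) / len(self.history)
            std = math.sqrt(sum((v - mean) ** 2 for v in self.history) / len(self.history))
            if std == 0:
                anomalous = value != mean
            else:
                anomalous = abs(value - mean) / std > self.threshold
        # Anomalies still join the baseline, so a sustained level shift is
        # eventually absorbed instead of alerting forever.
        self.history.append(value)
        return anomalous
```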

Observability Platform Blueprint

Reliability Engineering Playbooks

- Service onboarding: Observability checklist before go-live (metrics, logs, traces, SLOs, runbooks).

- Production readiness reviews: verify alerts, chaos tests, rollback plans.

- Release guardrails: pipeline steps that verify error budget status before a deployment proceeds.

- Reliability roadmap: quarterly plan for toil reduction, automation, debt payoff.
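The onboarding checklist and release guardrails above can be expressed as a single gate that a CI/CD step runs before deployment. The checklist keys, the manifest shape, and the 25% minimum-budget threshold are hypothetical; the point is that the gate returns human-readable reasons, which a pipeline can print before failing the job.

```python
from typing import Dict, List

# Hypothetical checklist keys mirroring the onboarding and readiness items above.
REQUIRED_ITEMS = ("metrics", "logs", "traces", "slos", "runbooks", "alerts", "rollback_plan")


def readiness_gaps(manifest: Dict) -> List[str]:
    """Checklist items that are missing or empty in a service manifest."""
    return [item for item in REQUIRED_ITEMS if not manifest.get(item)]


def release_gate(manifest: Dict, budget_remaining: float,
                 min_budget: float = 0.25) -> List[str]:
    """Reasons to block the release; an empty list means the gate passes.

    budget_remaining is the fraction of the error budget still unspent (0.0 to 1.0).
    """
    reasons = [f"missing: {item}" for item in readiness_gaps(manifest)]
    if budget_remaining < min_budget:
        reasons.append(f"error budget {budget_remaining:.0%} below {min_budget:.0%}")
    return reasons
```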

BFSI Case Study: Global Payments Processor

- Challenge: Frequent latency spikes in ACH payments causing SLA credits.

- Observability modernization: implemented OpenTelemetry across Golang services, replaced syslog dumps with structured JSON, and correlated queue depth with partner bank latency.

- Reliability practice: defined a payment submission SLO (99.95% of submissions under 600 ms) and introduced burn-rate alerts integrated into CAB change approvals.

- AI: anomaly detection on settlement rates flagged regional telecom outages 30 minutes before customer complaints.

- Outcome: SLA breaches dropped 80%, quarterly regulator report auto-generated from SLO dashboards.
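Reading the SLO above as "99.95% of payment submissions complete in under 600 ms" (our interpretation of the shorthand), the SLI is the fraction of requests below the threshold. At that objective, one submission in 2,000 may exceed 600 ms before the SLO is missed. The function names and the choice to treat an empty window as compliant are assumptions.

```python
from typing import Iterable


def latency_sli(latencies_ms: Iterable[float], threshold_ms: float = 600.0) -> float:
    """Fraction of requests that completed faster than threshold_ms."""
    samples = list(latencies_ms)
    if not samples:
        return 1.0  # assumption: an idle window counts as meeting the objective
    good = sum(1 for ms in samples if ms < threshold_ms)
    return good / len(samples)


def meets_slo(latencies_ms: Iterable[float], objective: float = 0.9995,
              threshold_ms: float = 600.0) -> bool:
    return latency_sli(latencies_ms, threshold_ms) >= objective
```

In production you would usually derive the good and total counts from histogram buckets (with a bucket boundary at the threshold) rather than from raw samples.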

BFSI Case Study: Retail Banking Mobile App

- Legacy state: monitoring based on synthetic pings, no traceability.

- Modernization: instrumentation via OpenTelemetry, AppDynamics RUM, and serverless trace ingestion.

- Incident response: ChatOps bots compile timeline + call graph for on-call engineer; feature flags disable problematic modules.

- Chaos: monthly failover of mobile gateway to DR region validated by regulators.

- Outcome: App store rating improved from 3.8 to 4.6, MTTR dropped from 90 minutes to 12 minutes, and regulators noted improved transparency.

Continuous Verification & AIOps

- Canary verification: AI compares canary vs baseline metrics, deciding promotion automatically.

- Log anomaly guardrails: pipeline halts if new release introduces unfamiliar log clusters.

- Business KPI checks: confirm loan approvals or card activations stay within variance.

- Zero-touch rollbacks: AI triggers a rollback when the burn rate is predicted to exhaust the error budget before the SLO window ends.
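Automated canary verification compares the canary cohort against the baseline on the same signals and decides whether to promote. The sketch below uses fixed ratio thresholds on error rate and p95 latency, a deliberately simple substitute for the AI or statistical comparison described above; the thresholds, minimum traffic, and error-rate floor are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Cohort:
    requests: int
    errors: int
    p95_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(baseline: Cohort, canary: Cohort,
                   max_error_ratio: float = 1.5, max_latency_ratio: float = 1.2,
                   min_requests: int = 500) -> Tuple[str, List[str]]:
    """Return ("promote" | "rollback" | "wait", reasons)."""
    if canary.requests < min_requests:
        return "wait", ["not enough canary traffic yet"]
    reasons = []
    # Floor the baseline so a zero-error baseline does not make any canary error fatal.
    base_err = max(baseline.error_rate, 0.001)
    if canary.error_rate > base_err * max_error_ratio:
        reasons.append(f"error rate {canary.error_rate:.2%} vs baseline {baseline.error_rate:.2%}")
    if canary.p95_ms > baseline.p95_ms * max_latency_ratio:
        reasons.append(f"p95 {canary.p95_ms:.0f} ms vs baseline {baseline.p95_ms:.0f} ms")
    return ("rollback" if reasons else "promote"), reasons
```

Business KPI checks such as loan approvals or card activations can be added as further comparisons in the same function.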

Compliance & Audit Readiness

- Retention policies: align log/metric storage with SOX, PCI, GDPR.

- Access controls: per-tenant RBAC integrated with IAM, plus audit logs recording who viewed which telemetry.

- Evidence packs: monthly snapshots of monitoring configuration, SLO reports, and incident logs.

- Regulator dashboards: curated read-only views showing uptime, failovers, and chaos test results.
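Evidence packs are more credible to auditors when tampering is detectable. One approach, sketched here with Python's standard library, records a SHA-256 digest of every artifact (SLO reports, monitoring configuration, incident logs) in a manifest and signs the manifest with HMAC-SHA256. The shared-key HMAC is an assumption for brevity; a regulated deployment would more likely sign with an asymmetric key held in an HSM or cloud KMS.

```python
import hashlib
import hmac
import json
from typing import Dict


def _canonical(body: Dict) -> bytes:
    """Stable JSON encoding so the same content always yields the same signature."""
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def build_manifest(artifacts: Dict[str, bytes], period: str, key: bytes) -> Dict:
    """Record a SHA-256 digest per artifact and sign the manifest with HMAC-SHA256."""
    body = {
        "period": period,
        "artifacts": {name: hashlib.sha256(data).hexdigest()
                      for name, data in sorted(artifacts.items())},
    }
    signature = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "signature": signature}


def verify_manifest(manifest: Dict, artifacts: Dict[str, bytes], key: bytes) -> bool:
    """True only if the signature is valid and every artifact still matches its digest."""
    body = {k: v for k, v in manifest.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest.get("signature", "")):
        return False
    recorded = body["artifacts"]
    return set(recorded) == set(artifacts) and all(
        hashlib.sha256(artifacts[name]).hexdigest() == digest
        for name, digest in recorded.items()
    )
```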

Telemetry Governance Council

BFSI organizations benefit from a telemetry governance forum that includes SRE, security, compliance, and product leaders.

- Data catalog: register every metric/log/trace stream with ownership and retention policies.

- Access reviews: quarterly checks ensuring least-privilege access to observability tools.

- Tagging standards: enforce labels for business line, criticality, customer region to support regulator queries.

- Cost optimization: monitor telemetry spend; use AI to recommend downsampling or archival.
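A data catalog is only as useful as its entries are complete. The sketch below validates a telemetry stream registration against the standards listed above: an owner, a retention policy, and business line, criticality, and customer region tags. The tag keys, the criticality values (tier0 to tier3), and the `TelemetryStream` shape are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

REQUIRED_TAGS = ("business_line", "criticality", "customer_region")
ALLOWED_CRITICALITY = {"tier0", "tier1", "tier2", "tier3"}


@dataclass
class TelemetryStream:
    name: str
    kind: str            # "metric", "log", or "trace"
    owner: str
    retention_days: int
    tags: Dict[str, str] = field(default_factory=dict)


def catalog_violations(stream: TelemetryStream) -> List[str]:
    """Problems that should block registration; empty means the entry is compliant."""
    problems = []
    if not stream.owner:
        problems.append("no owner")
    if stream.retention_days <= 0:
        problems.append("no retention policy")
    missing = [t for t in REQUIRED_TAGS if not stream.tags.get(t)]
    if missing:
        problems.append("missing tags: " + ", ".join(missing))
    criticality = stream.tags.get("criticality")
    if criticality and criticality not in ALLOWED_CRITICALITY:
        problems.append(f"unknown criticality '{criticality}'")
    return problems
```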

Synthetic Monitoring & Customer Journeys

- Synthetic probes: script high-value flows (loan application, FX transfer, card activation) from major geographies.

- Device diversity: emulate mobile networks and legacy browsers still common in banking.

- Alert strategy: treat synthetic failures as incident triggers only when correlated with backend signals.

- AI summarization: translate synthetic results into plain-language customer impact for executives.

Capacity & Resiliency Modeling

- Chaos combined with load: run chaos experiments during quarterly load tests to ensure resilience under stress.

- What-if simulations: AI models predict impact if a clearing partner goes offline or if market volatility triples traffic.

- Regulatory stress tests: align with annual climate/market stress programs, showing technology readiness.

BFSI Example: Securities Trading Platform

A brokerage running real-time equities trading needed sub-second insight into order routing.

- Observability: Deployed eBPF-based tracing to capture system calls and kernel latency.

- Reliability: Defined per-exchange SLOs, mapped to regulatory trade reporting requirements.

- AI: Real-time anomaly detection flagged order imbalance at a partner exchange, triggering auto-failover.

- Outcome: Met SEC Rule 613 timelines, avoided $1.2M in potential fines, and improved trader satisfaction scores.

Incident Simulation Program

- Monthly mini-scenarios: 30-minute exercises focusing on a single failure mode (e.g., certificate expiry).

- Quarterly large-scale drills: multi-team scenario with external partners and regulators observing.

- Scorecards: measure detection time, communication clarity, and runbook accuracy.

- AI playback: generate video-style reenactments from chat logs for training.

Reliability Organization Design

- Central SRE guild: defines standards, builds tooling, mentors domain teams.

- Embedded SREs: sit with product squads during modernization waves.

- Reliability champions: business representatives ensuring customer commitments remain visible.

- Career paths: tie promotions to SLO ownership and incident improvement.

AI-Augmented Knowledge Base

- Vector search: index runbooks, past incidents, ADRs, and regulator feedback.

- Chat interface: responders ask “Have we seen this payment timeout before?” and receive context.

- Auto-tagging: AI labels incidents with domains and root causes for better analytics.

- Continuous learning: insights feed backlog prioritization for modernization epics.
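The retrieval step behind a knowledge-base chat interface is similarity search over past incidents and runbooks. In the sketch below, simple word-count vectors and cosine similarity stand in for the embedding model and vector database a real deployment would use; the document IDs and texts are made up for illustration.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def _vector(text: str) -> Counter:
    """Bag-of-words vector: lowercase alphanumeric tokens and their counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def search(query: str, docs: Dict[str, str], k: int = 3) -> List[Tuple[str, float]]:
    """Top-k documents by cosine similarity, dropping those with no overlap."""
    q = _vector(query)
    scored = [(doc_id, _cosine(q, _vector(text))) for doc_id, text in docs.items()]
    scored = [s for s in scored if s[1] > 0]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:k]
```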

BFSI Example: Treasury Risk Analytics

Treasury teams run Monte Carlo simulations nightly. Observability ensured GPU clusters stayed healthy.

- Metric mix: GPU utilization, job queue depth, and variance in value-at-risk (VaR) results.

- Alerting: anomaly detection on VaR outputs triggered investigations before regulatory reports.

- Reliability: redundant job schedulers with failover tested monthly via chaos.

- Result: Basel III submissions delivered on time for six consecutive quarters, with documented observability evidence.

Executive Dashboards & Storytelling

- Single-page view: uptime, top incidents, SLO status, error budget burn, and customer impact.

- Narrative mode: AI drafts weekly updates summarizing reliability posture in business language.

- Trend analysis: highlight modernization milestones and corresponding reliability gains.

- Regulator-ready exports: PDF/CSV snapshots signed and archived for audits.

Action Plan

- Inventory current observability gaps per service; map to modernization timeline.

- Standardize instrumentation (OpenTelemetry, JSON logging, metric naming conventions).

- Define SLOs/SLIs for Tier 0/1 services with business stakeholders.

- Implement error budgets and burn-rate alerts integrated with release governance.

- Automate incident response (ChatOps, runbooks, AI summaries).

- Launch chaos program with clear guardrails and compliance oversight.

- Build observability platform pipelines with cost-aware storage policies.

- Generate regulator-ready reports from observability data monthly.

Looking Ahead

Observability ensures we can trust new systems. Next, we’ll modernize data foundations—schema evolution, CDC, event sourcing, and analytics enablement with AI copilots.
